In the past two years, AI has become a popular conversation starter, whether you're in a formal meeting or a casual setting. Today, nearly everyone has a sense of what AI is and what it can accomplish. Yet how AI actually works beneath the surface remains unfamiliar to most people.

Let's explore large language models, or LLMs, the technology behind today's conversational AI tools.

Unless you've been out of the loop, you've likely used ChatGPT, Bard, Bing Chat, or similar tools as go-to resources for information and research. These technologies have changed how we gather information online.

For a long time, AI models were mainly confined to research. Then, after ChatGPT launched in November 2022, its popularity exploded and made AI accessible to the general public. But this isn't the whole story: we've been using AI in many forms for years, sometimes without even noticing. Here are some well-known examples of AI in everyday life that didn't get the same hype as ChatGPT:

- Google Assistant, Siri, and Amazon Alexa

- Spam filters in Gmail and other email services

- Personalized shopping suggestions on Amazon

- Spotify’s music recommendations

These AI applications are all around us, even if they haven't received as much attention.

So, what sets tools like ChatGPT, Bard, and Bing Chat apart? The difference lies in their underlying models and the capabilities they offer. These text-based AIs are built on LLMs, which allow them to communicate in writing in ways that feel natural to us. Let's dive into what LLMs are and how they have evolved.

What Are Language Models?

Language models are rooted in Natural Language Processing (NLP), a branch of AI that enables computers to interact with human language. A language model is a mathematical framework for understanding and generating language. These models process text or audio input, convert it into numerical representations, and then respond by producing sequences of words based on patterns learned from their training data.

Language models learn the structure of language from their training data and predict which words are likely to come next, allowing them to generate coherent sentences or anticipate the next word in a phrase.
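To make next-word prediction concrete, here is a minimal Python sketch that generates a sentence one word at a time. The probability table `NEXT_WORD_PROBS` is hypothetical and hand-written, and each prediction looks only at the previous word; real LLMs learn their probabilities from data and condition on the whole preceding context.

```python
# Hypothetical, hand-written next-word probabilities (a real model learns these).
NEXT_WORD_PROBS: dict[str, dict[str, float]] = {
    "<start>": {"the": 0.6, "a": 0.4},
    "the": {"cat": 0.5, "dog": 0.3, "<end>": 0.2},
    "a": {"dog": 0.7, "cat": 0.3},
    "cat": {"sat": 0.8, "<end>": 0.2},
    "dog": {"barked": 0.9, "<end>": 0.1},
    "sat": {"<end>": 1.0},
    "barked": {"<end>": 1.0},
}


def predict_next(word: str) -> str:
    """Return the most probable next word (greedy decoding)."""
    candidates = NEXT_WORD_PROBS[word]
    return max(candidates, key=candidates.get)


def generate(max_words: int = 10) -> list[str]:
    """Build a sentence by repeatedly predicting the next word."""
    words: list[str] = []
    current = "<start>"
    for _ in range(max_words):
        current = predict_next(current)
        if current == "<end>":
            break
        words.append(current)
    return words


print(" ".join(generate()))  # the cat sat
```

Always picking the single most likely word is called greedy decoding. Chatbots usually sample from the probability distribution instead, which is one reason the same prompt can produce different answers.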

Types of Language Models

Statistical Language Models

In 1948, Claude Shannon's influential paper, "A Mathematical Theory of Communication," introduced a probabilistic approach to language. Statistical language models build on this idea: they estimate the probability of a word given the word or words that precede it, typically by counting how often word sequences (n-grams) occur in a large body of text. Using these probabilities, early computers could predict and construct language to a limited degree.
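A statistical model of this kind can be sketched in a few lines of Python: count how often each word follows another in a corpus (a bigram model) and turn the counts into probabilities. The three-sentence corpus and the `train_bigram` helper are made up for illustration; practical n-gram models use far more text and add smoothing for unseen word pairs.

```python
from collections import Counter, defaultdict


def train_bigram(corpus: list[str]) -> dict[str, dict[str, float]]:
    """Estimate P(next word | previous word) from bigram counts."""
    counts: defaultdict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        # <s> and </s> mark the sentence boundaries.
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    # Normalize counts into probabilities (maximum-likelihood estimate).
    probs: dict[str, dict[str, float]] = {}
    for prev, followers in counts.items():
        total = sum(followers.values())
        probs[prev] = {word: c / total for word, c in followers.items()}
    return probs


# A made-up, three-sentence corpus.
corpus = [
    "the cat sat on the mat",
    "the dog sat on the rug",
    "the cat ran",
]
model = train_bigram(corpus)
print(model["the"])  # {'cat': 0.4, 'mat': 0.2, 'dog': 0.2, 'rug': 0.2}
```

A word pair that never appears in the corpus gets a probability of zero here, which illustrates why purely count-based models struggle with unfamiliar text.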

Neural Network Language Models

As technology advanced, the limitations of statistical models, which struggle with word sequences they have rarely or never seen, led to the development of neural language models in the 2000s. Rather than relying on raw word counts, these models learn numerical representations of words from training data. The artificial neural networks powering them made it possible to capture complex patterns and dependencies in language, creating more nuanced text. Unlike their predecessors, which only tally counts, these models learn by adjusting their internal parameters to reduce prediction errors during training. However, many neural language models, particularly recurrent neural networks (RNNs), process text sequentially, one word at a time, which slowed down training and demanded substantial computing resources.
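The sequential bottleneck is easiest to see in a recurrent neural network. The sketch below is a deliberately tiny, hypothetical RNN with a single-number hidden state and hand-picked weights (`w_x`, `w_h`, `b`). It shows only that each step must wait for the previous one, not how a real model is built or trained.

```python
import math


def rnn_forward(inputs: list[float], w_x: float, w_h: float, b: float) -> list[float]:
    """Run a one-unit recurrent network over a sequence of scalar inputs.

    Each new hidden state depends on the previous one, so step t cannot begin
    until step t - 1 has finished: the loop cannot be parallelized across steps.
    """
    h = 0.0  # initial hidden state: the network's "memory" of the sequence so far
    states: list[float] = []
    for x in inputs:
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states


# Same inputs in a different order give a different final state, showing that
# information from earlier steps is carried forward.
print(rnn_forward([1.0, 0.0], w_x=0.5, w_h=1.0, b=0.0)[-1])
print(rnn_forward([0.0, 1.0], w_x=0.5, w_h=1.0, b=0.0)[-1])
```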

Transformers

In 2017, researchers at Google Brain revolutionized language models by introducing the Transformer architecture in their landmark paper, "Attention Is All You Need." Transformers use an "attention mechanism" that weighs how relevant different parts of the input are to one another, producing more contextually accurate language outputs. Because attention considers all positions in a sequence at once rather than one word at a time, this approach improves coherence and lets training run in parallel, making it faster and more efficient. It forms the backbone of many large language models we rely on today.
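The core computation introduced in that paper is scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V. Below is a plain-Python sketch of that formula alone; it omits the learned projection matrices, multiple attention heads, and masking that real Transformers use, and the tiny matrices in the example are invented. Each query row is computed independently of the others, which is what allows the work to run in parallel.

```python
import math

Matrix = list[list[float]]


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(k[0])
    output: Matrix = []
    for q_row in q:  # queries are independent, so these iterations could run in parallel
        # Relevance score of each key (input position) for this query.
        scores = [sum(a * b for a, b in zip(q_row, k_row)) / math.sqrt(d_k) for k_row in k]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        output.append([sum(w * v_row[j] for w, v_row in zip(weights, v)) for j in range(len(v[0]))])
    return output


# Invented two-position example: the query resembles the first key more,
# so the output leans toward the first value vector.
q = [[1.0, 0.0]]
k = [[1.0, 0.0], [0.0, 1.0]]
v = [[10.0, 0.0], [0.0, 10.0]]
print(attention(q, k, v))
```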

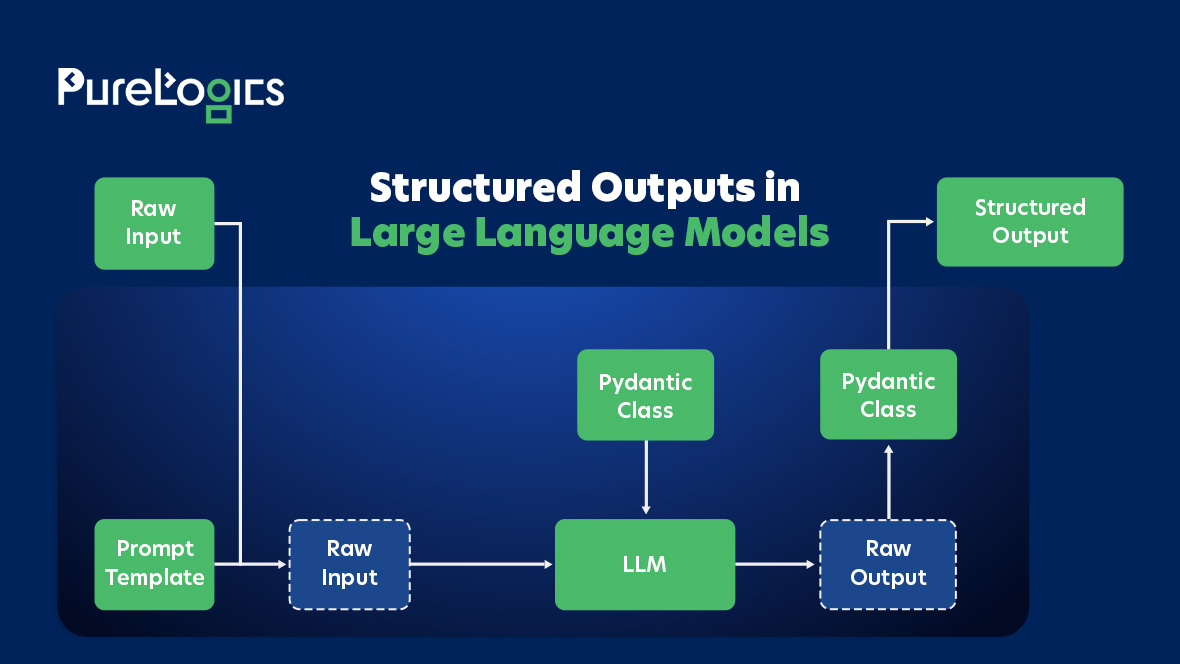

Benefits of Structured Outputs from LLMs

Structured outputs are LLM responses that follow a predefined format, such as a JSON object with fixed fields, rather than free-form prose. They enhance the precision and usability of AI-generated content. Here's how they provide significant advantages:

Improved Accuracy and Consistency

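Constraining outputs to a structured format promotes accuracy and consistency: every response contains the same fields in the same shape, so missing or malformed information is easy to detect. This minimizes the chances of the model introducing irrelevant or inconsistent data, although a well-formed response can still contain incorrect values.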
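As a minimal sketch of what a fixed format makes possible, the Python function below checks a model's JSON reply against a hypothetical schema (`EXPECTED_FIELDS`, with made-up customer-preference fields). It is hand-rolled for illustration; in practice, teams often use a JSON Schema validator or a provider's built-in structured-output mode instead.

```python
import json

# Hypothetical schema: the fields we ask the model to return, with their types.
EXPECTED_FIELDS = {"customer_id": str, "preferred_category": str, "budget_usd": (int, float)}


def validate_output(raw: str) -> dict:
    """Parse a model reply and check that it matches the expected shape.

    Raises ValueError if the JSON is malformed, a field is missing or has the
    wrong type, or the model added fields that were not requested.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = EXPECTED_FIELDS.keys() - data.keys()
    extra = data.keys() - EXPECTED_FIELDS.keys()
    if missing or extra:
        raise ValueError(f"missing={sorted(missing)} extra={sorted(extra)}")
    for field, expected_type in EXPECTED_FIELDS.items():
        value = data[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            raise ValueError(f"{field!r} has wrong type: {type(value).__name__}")
    return data


print(validate_output('{"customer_id": "c1", "preferred_category": "books", "budget_usd": 50}'))
try:
    validate_output('{"customer_id": "c1", "preferred_category": "books"}')
except ValueError as err:
    print(err)  # missing=['budget_usd'] extra=[]
```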

Enhanced Interpretability

Structured data simplifies interpretation, allowing for quick identification of key insights. This clarity makes information actionable and accessible, which is valuable for both human users and machine systems that rely on structured data.

Easier System Integration

Structured outputs are compatible with databases, APIs, and other software, easing integration. This approach reduces reliance on complex natural language processing to parse unstructured text, saving time and simplifying data handling.
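Once responses follow a fixed format, loading them into a database needs no text parsing. This sketch uses Python's built-in `sqlite3` module with an in-memory database; the `preferences` table and the sample responses are hypothetical.

```python
import json
import sqlite3

# Hypothetical model responses, already in the agreed JSON format.
responses = [
    '{"customer_id": "c1", "preferred_category": "books", "budget_usd": 50}',
    '{"customer_id": "c2", "preferred_category": "games", "budget_usd": 120}',
]

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE preferences (customer_id TEXT PRIMARY KEY, preferred_category TEXT, budget_usd REAL)"
)
# Named placeholders map the JSON keys directly onto table columns.
conn.executemany(
    "INSERT INTO preferences VALUES (:customer_id, :preferred_category, :budget_usd)",
    [json.loads(r) for r in responses],
)
conn.commit()

rows = conn.execute("SELECT customer_id, budget_usd FROM preferences ORDER BY customer_id").fetchall()
print(rows)  # [('c1', 50.0), ('c2', 120.0)]
```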

Reduced Hallucinations and Irrelevant Information

Adhering to a structured output format reduces the likelihood of generating extraneous or inaccurate information. The model’s response remains focused, limiting instances of “hallucinations”—incorrect or made-up facts.

Streamlined Data Processing and Analysis

Structured responses are easier to process, analyze, and visualize. For instance, customer preferences gathered in a structured format enable rapid aggregation and analysis, improving data utility and supporting effective decision-making.
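For example, assuming an LLM has already turned free-text survey answers into records with the hypothetical fields below, aggregating them takes only a few lines of standard-library Python:

```python
from collections import Counter, defaultdict

# Hypothetical records an LLM extracted from free-text survey answers.
records = [
    {"customer_id": "c1", "preferred_category": "books", "budget_usd": 50},
    {"customer_id": "c2", "preferred_category": "games", "budget_usd": 120},
    {"customer_id": "c3", "preferred_category": "books", "budget_usd": 30},
]

# How many customers prefer each category?
category_counts = Counter(r["preferred_category"] for r in records)

# Average budget per category.
budgets: defaultdict[str, list[float]] = defaultdict(list)
for r in records:
    budgets[r["preferred_category"]].append(r["budget_usd"])
avg_budget = {cat: sum(vals) / len(vals) for cat, vals in budgets.items()}

print(category_counts.most_common())  # [('books', 2), ('games', 1)]
print(avg_budget)  # {'books': 40.0, 'games': 120.0}
```

The same analysis over free-form replies would first require extracting the category and budget from each answer.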

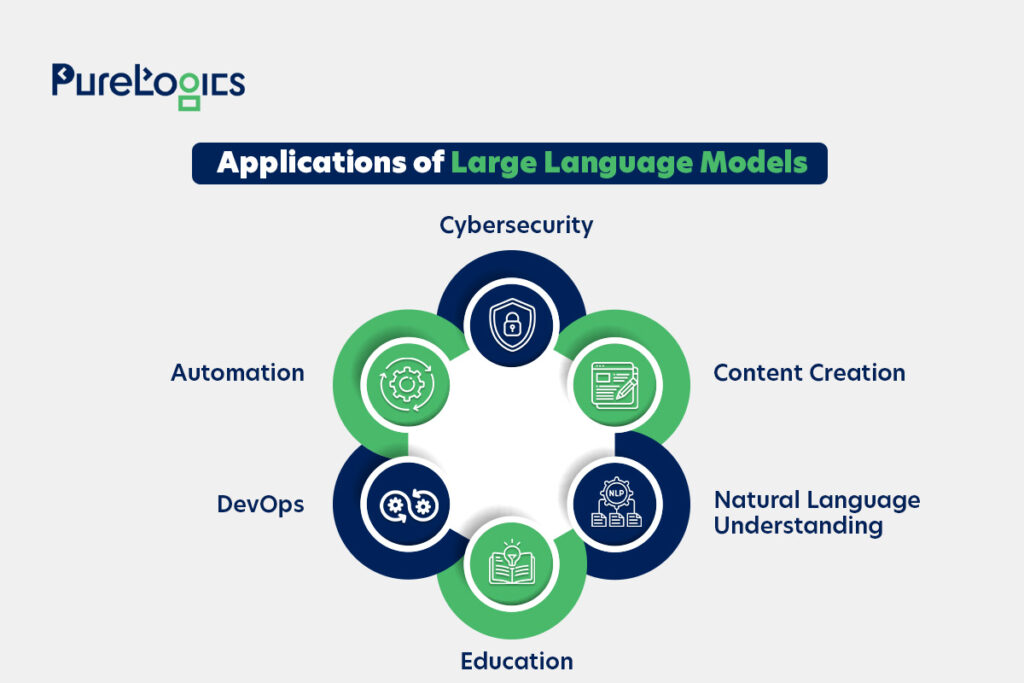

Applications of Large Language Models

Cybersecurity

With a high demand for skilled professionals, cybersecurity stands to benefit significantly from LLMs. These advanced AI tools can be trained to understand specific organizational contexts, allowing them to make precise, relevant predictions. When applied thoughtfully, AI can be transformative for essential use cases, such as:

- Threat Intelligence Analysis: LLM-enabled tools can use large datasets to detect patterns and produce actionable threat intelligence reports.

- Phishing Detection: By examining email content and metadata, AI tools can detect phishing attempts and help prevent email-based threats.

- Security Chatbots: Smart chatbots can support security teams by answering questions, aiding in incident analysis, and even automating responses to frequent security incidents. This automation helps manage the heavy load of alerts that security analysts face—often exceeding 10,000 daily.
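To illustrate how structured outputs fit these use cases, here is a sketch of the phishing-detection case: the prompt asks for a JSON verdict, and the reply is validated before any automated action is taken. The prompt wording, verdict labels, 0.8 quarantine threshold, and helper names are all assumptions, and the actual LLM call is replaced by a hard-coded sample reply.

```python
import json

ALLOWED_VERDICTS = ("phishing", "legitimate", "uncertain")


def build_prompt(sender: str, subject: str, body: str) -> str:
    """Ask the model for a machine-readable verdict instead of free text."""
    return (
        "Classify the email below as phishing, legitimate, or uncertain.\n"
        'Reply with JSON only, in the form {"verdict": "...", "confidence": 0.0, "indicators": ["..."]}.\n\n'
        f"From: {sender}\nSubject: {subject}\n\n{body}"
    )


def parse_verdict(raw: str) -> dict:
    """Validate the model's reply so automated actions can rely on its shape."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if data.get("verdict") not in ALLOWED_VERDICTS:
        raise ValueError(f"unexpected verdict: {data.get('verdict')!r}")
    confidence = data.get("confidence")
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)) or not 0 <= confidence <= 1:
        raise ValueError("confidence must be a number between 0 and 1")
    if not isinstance(data.get("indicators"), list):
        raise ValueError("indicators must be a list")
    return data


def should_quarantine(verdict: dict, threshold: float = 0.8) -> bool:
    """Hypothetical policy: quarantine only confident phishing verdicts."""
    return verdict["verdict"] == "phishing" and verdict["confidence"] >= threshold


prompt = build_prompt("billing@paypa1-support.example", "Urgent: account locked", "Verify your password here...")
# Stand-in for the model's reply; no LLM is called in this sketch.
reply = '{"verdict": "phishing", "confidence": 0.93, "indicators": ["lookalike domain", "urgent tone"]}'
verdict = parse_verdict(reply)
print(should_quarantine(verdict))  # True
```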

Automation

AI is set to play a key role in automating workflows and processes, particularly in large enterprises handling extensive data streams and multiple endpoints.

DevOps

Code Review and Analysis: Tools like GitHub Copilot and Cursor act as AI development companions, helping developers write, review, and analyze code. These tools can identify potential bugs, flag security vulnerabilities, and suggest enhancements. They can even explain complex code in natural language.

Automated Documentation Generation: Writing documentation is essential yet time-intensive. LLM tools, like ChatGPT, can streamline this by generating documentation from code and comments. Human review of the generated documentation is still recommended before distribution.
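One way to prepare input for an LLM documentation tool is to first find the functions that lack docstrings. The sketch below uses Python's standard `ast` module for that step and assembles a prompt; `build_doc_prompt` and its wording are hypothetical, and sending the prompt to a model is left out.

```python
import ast


def undocumented_functions(source: str) -> list[str]:
    """Return signatures of functions in `source` that have no docstring."""
    tree = ast.parse(source)
    signatures = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)) and ast.get_docstring(node) is None:
            prefix = "async def" if isinstance(node, ast.AsyncFunctionDef) else "def"
            signatures.append(f"{prefix} {node.name}({ast.unparse(node.args)})")
    return signatures


def build_doc_prompt(source: str) -> str:
    """Hypothetical prompt asking an LLM to draft docstrings for human review."""
    listing = "\n".join(undocumented_functions(source))
    return (
        "Write a concise docstring for each function listed below. "
        "A developer will review the drafts before publishing.\n\n"
        f"Functions missing docstrings:\n{listing}\n\nFull source:\n{source}"
    )


sample = '''
def add(a, b=1):
    return a + b

def greet(name):
    """Return a greeting."""
    return "Hello, " + name
'''
print(undocumented_functions(sample))  # ['def add(a, b=1)']
```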

Education

AI has made strides in learning assistance. For example, Google's Project Tailwind (since renamed NotebookLM) takes a novel approach to AI-assisted learning by building an AI-powered notebook around the user's own documents. Similar benefits can come from using a general-purpose AI chatbot as a research companion. AI is also widely used in language learning, offering personalized guidance for acquiring new languages.

Natural Language Understanding

Sentiment Analysis: Beyond content generation, LLMs are effective at identifying sentiment in text, such as emotion and tone. Writers can use this to check how their work comes across, for example to keep it objective without losing a personal touch.

Text Classification and Tagging: LLMs like ChatGPT can classify and tag text automatically, aiding data organization, content categorization, and spam detection.
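Classification and tagging work best when the model must choose from a fixed label set and its reply is checked afterward. In this sketch, the label set and helper names are hypothetical, the model call itself is not shown, and any reply outside the set falls back to `"other"`.

```python
# Hypothetical label set for tagging incoming support messages.
LABELS = ("billing", "technical_support", "spam", "other")


def classification_prompt(message: str) -> str:
    """Constrain the model to exactly one label from LABELS."""
    return (
        f"Classify the message into exactly one of these labels: {', '.join(LABELS)}.\n"
        "Answer with the label only.\n\n"
        f"Message: {message}"
    )


def parse_label(reply: str) -> str:
    """Normalize the model's reply; anything outside LABELS becomes 'other'."""
    label = reply.strip().strip(".").lower().replace(" ", "_")
    return label if label in LABELS else "other"


print(parse_label(" Technical support.\n"))  # technical_support
print(parse_label("Refund request"))  # other
```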

Writing Assistant & Content Creation

Article and Blog Writing: ChatGPT and similar LLM-based tools have gained popularity for assisting writers—whether amateurs or professionals—in drafting articles, suggesting content ideas, or even writing entire blog posts.

Copywriting: Copywriting has become one of the most successful LLM use cases, since models can handle core tasks such as drafting headlines and product descriptions quickly, sometimes matching the quality of human-written copy. Popular tools in this field include Jasper and Copy.ai.

Future of AI & LLMs

Ethical Considerations

Misinformation and Deepfakes: LLMs’ ability to produce realistic text (and visuals) can be misused to spread misinformation or create deepfake content, making it challenging to differentiate AI-generated information from human-generated content.

Data Privacy: Privacy remains a significant issue. For example, ChatGPT was temporarily banned in Italy in 2023 over data privacy concerns. These concerns are hard to avoid because LLMs often train on publicly available data, which can include personal or copyrighted material.

Current Limitations

Biases: AI systems can absorb biases from the vast amount of data they process. LLMs are built on immense datasets, and the assumptions within this data can shape their responses. For instance, even when a model like GPT-3 is tuned to stay neutral on sensitive political topics, it may still adopt stereotypes and social conventions present in its training data.

Lack of Common Sense Reasoning: While models like GPT-4 and Bard are highly advanced, they still struggle with common sense reasoning. This limitation can lead to inaccurate answers and even fabrication. For example, Google employees reportedly described early versions of Bard as "a pathological liar" because of its accuracy issues.

Interpretability and Transparency: Companies like OpenAI and Google, being for-profit, are not obligated to disclose how models such as GPT or PaLM work. As a result, LLMs are often "black boxes" to most users. Open-source alternatives, many of them shared on platforms like Hugging Face, promote greater transparency by publishing model weights and welcoming community contributions.

Resource Intensive: Training LLMs demands extensive computational resources, which can have environmental impacts and makes these technologies accessible primarily to organizations with substantial financial backing.

Potential Advancements

Common-Sense Reasoning: In their current form, LLMs can lack common-sense reasoning, sometimes making their responses appear naive. Enhancing future models with this capability could unlock new applications and improve reliability.

Continual and Lifelong Learning: LLMs like ChatGPT are limited by a fixed training cutoff (2021 for the original ChatGPT), so on their own they cannot answer questions about later events. Allowing LLMs to learn continuously from new data without extensive retraining could be revolutionary for AI.

Conclusion

Structured outputs from LLMs transform data handling and analysis by enabling consistent, accurate, and interpretable content production. With techniques like prompt engineering, function calling, and JSON schema enforcement, LLMs can deliver outputs ready for seamless integration into databases, APIs, and analytical tools. This approach particularly benefits high-precision fields such as finance, healthcare, and legal services.
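A common pattern that combines prompt engineering with validation is to request JSON, check it, and re-prompt with the error if the check fails. The sketch below assumes a hypothetical `generate(prompt) -> str` function standing in for whatever LLM API you use. Function calling and provider-side JSON schema enforcement can reduce how often a retry is needed, but their APIs differ by provider and are not shown.

```python
import json
from typing import Callable


def get_structured_output(
    generate: Callable[[str], str],
    prompt: str,
    required_keys: set[str],
    max_attempts: int = 3,
) -> dict:
    """Request JSON, validate it, and re-prompt with the error until it passes."""
    current_prompt = prompt
    last_error = ""
    for _ in range(max_attempts):
        raw = generate(current_prompt)
        try:
            data = json.loads(raw)  # raises json.JSONDecodeError, a ValueError subclass
            if not isinstance(data, dict):
                raise ValueError("expected a JSON object")
            missing = required_keys - set(data)
            if missing:
                raise ValueError(f"missing keys: {sorted(missing)}")
            return data
        except ValueError as exc:
            last_error = str(exc)
            # Feed the error back so the model can correct its next reply.
            current_prompt = (
                f"{prompt}\n\nYour previous reply was invalid ({last_error}). "
                "Reply with corrected JSON only."
            )
    raise RuntimeError(f"no valid output after {max_attempts} attempts: {last_error}")


# Fake model for demonstration: the first reply wraps JSON in chatter, the second is valid.
replies = iter(['Sure! Here it is: {"total": 42}', '{"invoice_id": "INV-7", "total": 42.5}'])


def fake_generate(prompt: str) -> str:
    return next(replies)


result = get_structured_output(fake_generate, "Extract invoice_id and total as JSON.", {"invoice_id", "total"})
print(result)  # {'invoice_id': 'INV-7', 'total': 42.5}
```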

As LLMs evolve, their ability to produce structured outputs will become crucial in automating tasks and enhancing efficiency across industries. Structured outputs expedite data analysis and integration, reducing the time and resources required for AI-driven implementations. By integrating structured data, businesses can drive innovation, streamline operations, and make informed decisions.

Unlock the potential of structured LLM outputs for your business needs. Contact PureLogics experts to transform your data management and analysis.

January 31 2025
